Evaluations

An Evaluation runs an agent against all test cases in a dataset, applies the configured checks to each response, and produces a per-test-case result with a pass/fail verdict. You can also run and review evaluations from the Hub UI evaluations page.

Remote evaluations

A remote evaluation calls your registered agent’s HTTP endpoint for every test case in the dataset.

from giskard_hub import HubClient

hub = HubClient()

evaluation = hub.evaluations.create(
    name="v2.1 regression run",
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
)

print(evaluation.id)

# Wait for completion
evaluation = hub.helpers.wait_for_completion(evaluation)

print(f"Evaluation completed with state: {evaluation.state}")

Alternatively, you can run a remote evaluation with the helper method:

evaluation = hub.helpers.evaluate(
    name="v2.2 regression run",
    project=my_project,  # giskard_hub.types.Project or str
    dataset=my_dataset,  # giskard_hub.types.Dataset or str
    agent=my_agent,  # giskard_hub.types.Agent or str
)

Filter by tags

Run the evaluation only against test cases with specific tags:

evaluation = hub.evaluations.create(
    name="Shipping-only run",
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
    tags=["shipping"],
)

Run multiple times

Set run_count to run each test case multiple times, which is useful for measuring consistency across stochastic outputs:

evaluation = hub.evaluations.create(
    name="Consistency check — 3x",
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
    run_count=3,
)

Local evaluations

A local evaluation runs inference through a Python function in your own process instead of calling an HTTP endpoint. This is ideal for evaluating models during development without exposing an API.

Pass your callable as the agent parameter; the helper then runs a local evaluation automatically.

from giskard_hub.types import ChatMessage, AgentOutput

def my_agent(messages: list[ChatMessage]) -> str | ChatMessage | AgentOutput:
    # Call your local model or chain here
    user_input = messages[-1].content

    return ChatMessage(
        role="assistant",
        content=f"Echo: {user_input}",  # replace with real inference
    )

evaluation = hub.helpers.evaluate(
    dataset="dataset-id",
    agent=my_agent,
    name="Local evaluation",
)

Inspect results

List all results

results = hub.evaluations.results.list("evaluation-id")

for result in results: print(f"{result.id}: {result.state}") for check in result.results: verdict = "✓" if check.passed else "✗" print(f" {verdict} {check.name}")You can also use the helper to print a formatted summary of all metrics for an evaluation:

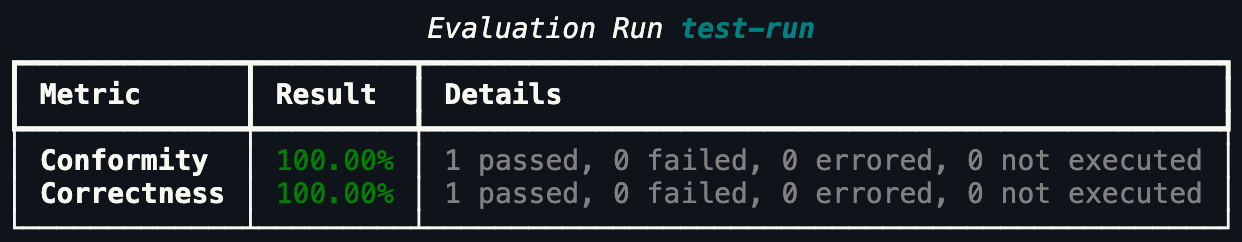

hub.helpers.print_metrics(evaluation)The output is a rich terminal table showing per-check pass rates:
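The result loop earlier in this section can be extended to tally which checks fail most often. The sketch below is a minimal illustration, not part of the SDK: count_failed_checks is a hypothetical helper, and the SimpleNamespace objects are stand-ins that only mimic the result.results, check.name and check.passed attributes used above.

```python
from collections import Counter
from types import SimpleNamespace


def count_failed_checks(results):
    """Return a Counter mapping check name -> number of results where it did not pass."""
    failures = Counter()
    for result in results:
        for check in result.results:
            if not check.passed:
                failures[check.name] += 1
    return failures


# Stand-in data shaped like the objects in the loop above (hypothetical values).
sample_results = [
    SimpleNamespace(results=[
        SimpleNamespace(name="correctness", passed=True),
        SimpleNamespace(name="groundedness", passed=False),
    ]),
    SimpleNamespace(results=[
        SimpleNamespace(name="correctness", passed=False),
        SimpleNamespace(name="groundedness", passed=False),
    ]),
]

# Print the checks ordered from most to least failures.
for name, count in count_failed_checks(sample_results).most_common():
    print(f"{name}: {count} failed")
```

With real data, the same function could be given the list returned by hub.evaluations.results.list, assuming each result exposes the attributes shown in the loop.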

Search and filter results

results_search = hub.evaluations.results.search(
    "evaluation-id",
    filters={"sample_success": {"selected_options": ["fail"]}},
    limit=50,
)

Retrieve a single result

result = hub.evaluations.results.retrieve(
    "result-id",
    evaluation_id="evaluation-id",
)

print(result.state)

Update the failure category of a result (manual review)

The full list of available failure categories for a project can be retrieved via hub.projects.retrieve("project-id").failure_categories.

hub.evaluations.results.update(
    "result-id",
    evaluation_id="evaluation-id",
    failure_category={
        "identifier": "contradiction",
        "title": "Contradiction",
        "description": "The agent incorrectly provides an answer that contradicts the information given in the context (for groundedness checks) or in the reference (for correctness checks).",
    },
)

Control result visibility

You can hide individual results from the default view (for example, noisy outliers):

hub.evaluations.results.update_visibility(
    "result-id",
    evaluation_id="evaluation-id",
    hidden=True,
)

Access aggregated metrics

After an evaluation completes, access the per-check aggregated metrics programmatically:

for metric in evaluation.metrics:
    print(
        f"{metric.name}: {metric.success_rate * 100:.1f}% "
        f"({metric.passed} passed, {metric.failed} failed, {metric.errored} errored)"
    )

Each Metric object has the following fields:

| Field | Type | Description |
|---|---|---|
| name | str | Check identifier (e.g. "correctness", "global") |
| display_name | str | Human-readable name |
| passed | int | Number of test cases that passed |
| failed | int | Number of test cases that failed |
| errored | int | Number of test cases that errored |
| total | int | Total number of test cases |
| success_rate | float | Pass rate as a float between 0.0 and 1.0 |

The special "global" metric aggregates across all checks.
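The fields in the table above are enough to flag individual checks that fall under a target pass rate. The following is a minimal sketch under stated assumptions: MetricStub is a hypothetical stand-in carrying a subset of the Metric fields, checks_below is an illustrative helper rather than an SDK function, and the sample numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class MetricStub:
    """Hypothetical stand-in with a subset of the Metric fields listed above."""
    name: str
    passed: int
    failed: int
    errored: int
    total: int
    success_rate: float


def checks_below(metrics, threshold):
    """Return names of per-check metrics whose success_rate (0.0-1.0) is under threshold.

    The aggregated "global" entry is skipped so only individual checks are reported.
    """
    return [
        m.name
        for m in metrics
        if m.name != "global" and m.success_rate < threshold
    ]


sample = [
    MetricStub("global", 17, 3, 0, 20, 0.85),
    MetricStub("correctness", 9, 1, 0, 10, 0.9),
    MetricStub("groundedness", 8, 2, 0, 10, 0.8),
]
print(checks_below(sample, 0.85))
```

Because the helper only reads name and success_rate, it should work equally on evaluation.metrics, provided those objects expose the fields documented in the table.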

Rerun errored results

If some test cases errored because of transient agent problems (timeouts, 5xx responses), rerun only the errored ones without triggering a full re-evaluation:

hub.evaluations.rerun_errored_results("evaluation-id")

Rerun a single specific result:

hub.evaluations.results.rerun_test_case(
    "result-id",
    evaluation_id="evaluation-id",
)

CI/CD integration

Use evaluations as a quality gate in your CI/CD pipeline. Exit with a non-zero code if the global pass rate falls below your threshold:

import os
import sys

from giskard_hub import HubClient

hub = HubClient()

evaluation = hub.evaluations.create(
    name=f"CI run — {os.environ.get('CI_COMMIT_SHA', 'local')}",
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
)

try:
    evaluation = hub.helpers.wait_for_completion(evaluation)
except Exception as e:
    # Report the cause so the CI log shows why the run was aborted
    print(f"Evaluation encountered errors: {e}")
    sys.exit(1)

# The "global" metric aggregates across all checks
global_metrics = [m for m in evaluation.metrics if m.name == "global"][0]
pass_rate = global_metrics.success_rate * 100

print(f"Pass rate: {pass_rate:.2f}% ({global_metrics.passed}/{global_metrics.total})")

THRESHOLD = 90.0
if pass_rate < THRESHOLD:
    print(f"Quality gate failed: pass rate {pass_rate:.1f}% < {THRESHOLD}%")
    sys.exit(1)

print("Quality gate passed.")

Run a single test case ad hoc

You can evaluate a single (input, output) pair against a set of checks without running a full evaluation. This is useful for debugging or CI gates on individual responses:

from giskard_hub.types import ChatMessage

results = hub.evaluations.run_single(
    project_id="project-id",
    agent_output={
        "response": ChatMessage(
            role="assistant",
            content="You can return anything within 30 days.",
        )
    },
    messages=[{"role": "user", "content": "What is your return policy?"}],
    checks=[
        {"identifier": "tone_professional"},
    ],
)

for check in results:
    print(f"{check.name}: {'passed' if check.passed else 'failed'}")

List and manage evaluations

evaluations = hub.evaluations.list(project_id="project-id")

hub.evaluations.update("evaluation-id", name="Renamed evaluation")

hub.evaluations.delete("evaluation-id")

Scheduled evaluations

Scheduled evaluations run an evaluation automatically on a regular cadence (daily, weekly, or monthly). They are the foundation of continuous quality monitoring: set one up once and the Hub keeps running it, so you catch regressions without manual effort.

Create a scheduled evaluation

schedule = hub.scheduled_evaluations.create(
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
    name="Weekly regression check",
    frequency="weekly",
    time="09:00",  # UTC time of day
    day_of_week=1,  # 1 = Monday, 7 = Sunday
)

print(f"Scheduled evaluation created: {schedule.id}")

Frequency options

| frequency | Description | Required extra params |
|---|---|---|
| "daily" | Runs every day at the specified time | time |
| "weekly" | Runs once a week | time, day_of_week (1–7) |
| "monthly" | Runs once a month | time, day_of_month (1–28) |

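The rules in the frequency table can be checked on the client before calling the API, which gives an early, readable error in scripts. This is a sketch only: validate_schedule is a hypothetical helper, the 24-hour HH:MM format is inferred from the examples on this page, and how the Hub itself treats invalid or extra parameters is not covered here.

```python
import re

# Assumed time format: 24-hour "HH:MM", as in the examples on this page.
_TIME_RE = re.compile(r"([01]\d|2[0-3]):[0-5]\d")


def validate_schedule(frequency, time, day_of_week=None, day_of_month=None):
    """Raise ValueError if the arguments do not satisfy the frequency table."""
    if not _TIME_RE.fullmatch(time):
        raise ValueError(f"time must be HH:MM (24-hour), got {time!r}")
    if frequency == "daily":
        return
    if frequency == "weekly":
        if day_of_week is None or not 1 <= day_of_week <= 7:
            raise ValueError("weekly schedules need day_of_week in 1-7")
        return
    if frequency == "monthly":
        if day_of_month is None or not 1 <= day_of_month <= 28:
            raise ValueError("monthly schedules need day_of_month in 1-28")
        return
    raise ValueError(f"unknown frequency: {frequency!r}")


# Valid combinations return silently; invalid ones raise ValueError.
validate_schedule("weekly", "09:00", day_of_week=1)
validate_schedule("monthly", "08:00", day_of_month=1)
```

The helper deliberately ignores extra parameters (for example day_of_week on a daily schedule), since this page does not say whether they are rejected.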
# Daily at 06:00 UTC
hub.scheduled_evaluations.create(
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
    name="Daily smoke test",
    frequency="daily",
    time="06:00",
)

# Monthly on the 1st at 08:00 UTC
hub.scheduled_evaluations.create(
    project_id="project-id",
    agent_id="agent-id",
    dataset_id="dataset-id",
    name="Monthly full regression",
    frequency="monthly",
    time="08:00",
    day_of_month=1,
)

List scheduled evaluations

schedules = hub.scheduled_evaluations.list(project_id="project-id")

for s in schedules:
    print(f"{s.name} — {s.frequency} — last execution: {s.last_execution_at}")

Retrieve a schedule with its recent runs

scheduled_evaluation = hub.scheduled_evaluations.retrieve(
    "scheduled-evaluation-id",
    include=["evaluations"],
)

print(f"Schedule: {scheduled_evaluation.name}")
for evaluation in scheduled_evaluation.evaluations:
    print(f" Run {evaluation.id}: {evaluation.state} at {evaluation.created_at}")

List past evaluation runs

evaluation_runs = hub.scheduled_evaluations.list_evaluations(
    "scheduled-evaluation-id",
)

for run in evaluation_runs:
    print(f"Run: {run.id} — {run.state} — {run.created_at}")

Update and delete scheduled evaluations

hub.scheduled_evaluations.update(
    "scheduled-evaluation-id",
    name="Updated schedule name",
    frequency="daily",
    time="07:30",
)

hub.scheduled_evaluations.delete("scheduled-evaluation-id")
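
Once a schedule has produced several runs, one way to catch regressions is to compare the pass rate of each run with the run before it. The sketch below works on a plain list of pass rates; find_regressions and the max_drop tolerance are hypothetical, and it assumes you have already collected one pass rate per run (for example the success_rate of the "global" metric, if the run objects expose metrics).

```python
def find_regressions(pass_rates, max_drop=0.05):
    """Return (index, previous, current) for every run whose pass rate fell
    by more than max_drop compared with the run immediately before it.

    pass_rates must be ordered oldest first, with values between 0.0 and 1.0.
    """
    regressions = []
    for i in range(1, len(pass_rates)):
        previous, current = pass_rates[i - 1], pass_rates[i]
        if previous - current > max_drop:
            regressions.append((i, previous, current))
    return regressions


# Hypothetical pass rates from four consecutive weekly runs, oldest first.
weekly_rates = [0.92, 0.93, 0.84, 0.85]
for index, previous, current in find_regressions(weekly_rates):
    print(f"Run #{index} dropped from {previous:.0%} to {current:.0%}")
```

Comparing consecutive runs rather than using a fixed threshold highlights sudden drops even when the absolute pass rate is still acceptable; the two approaches can be combined.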