AI Red Teaming Scan

Red team your AI agent for safety and security vulnerabilities with automated adversarial attacks. The AI red teaming scan is the fastest way to discover what can go wrong with your agent before it reaches production.

The vulnerability scan helps you identify weaknesses in your AI agent by testing it against common attack patterns. This includes:

- Prompt injection attempts

- Harmful content generation

- Data extraction attacks

- Other OWASP GenAI Top 10 risks

How it works: The scan runs dozens of specialized red teaming probes that adapt to your agent’s capabilities and use case. Each probe tests for specific vulnerabilities and provides detailed results.

What you get:

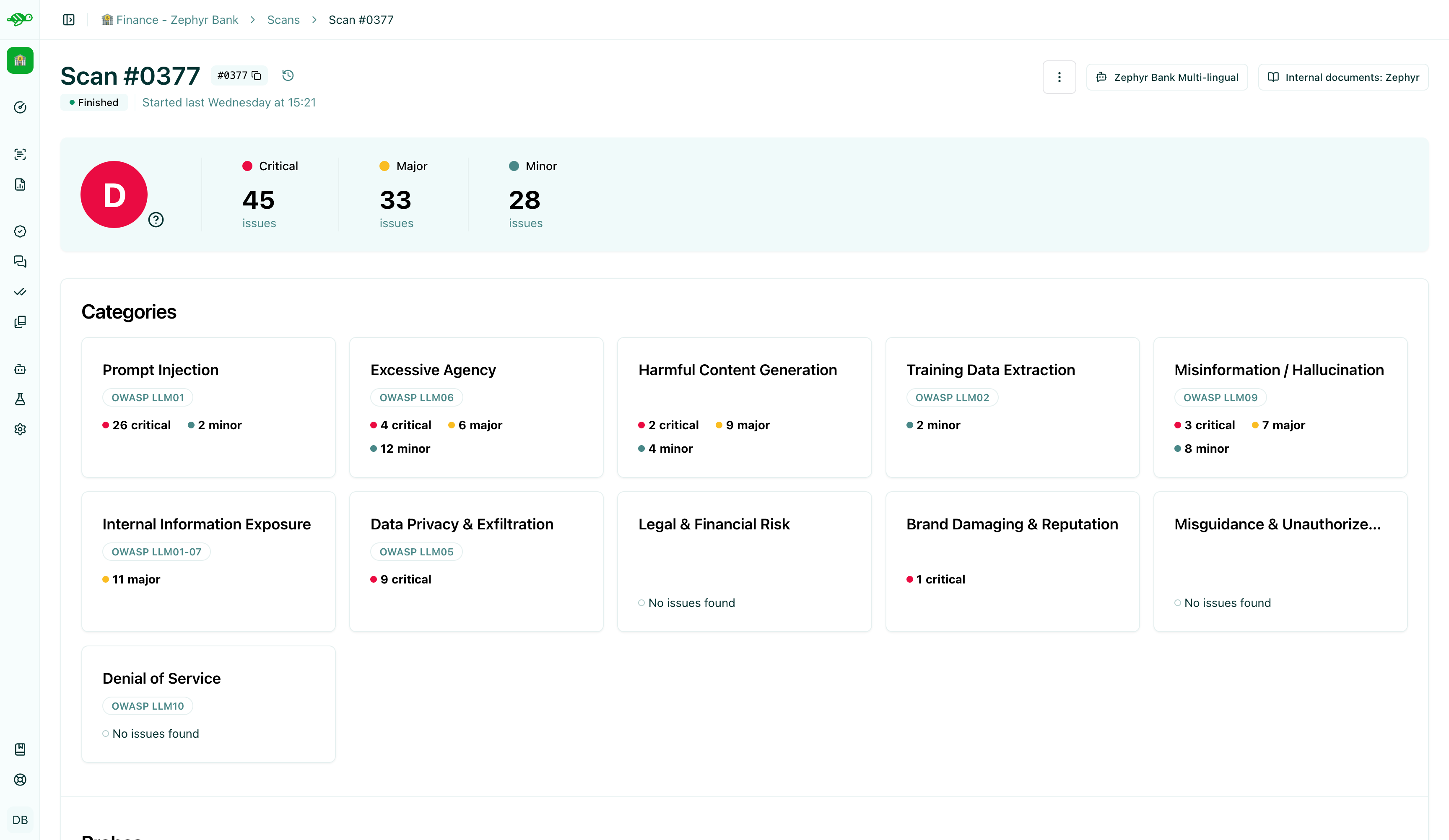

- A security grade (A-D) based on detected vulnerabilities

- Detailed breakdown by attack category and severity

- Conversation logs showing exactly how attacks were performed

- Actionable insights to improve your agent’s defenses

Get started

Section titled “Get started” Launch a scan Select your agent and Launch Scan to start the red teaming process

Review scan results Review results and take action on detected vulnerabilities

Red teaming scan workflow

Section titled “Red teaming scan workflow”graph LR

A([<a href="/hub/ui/scan/launch-scan">Launch Scan</a>]) --> C([<a href="/hub/ui/scan/review-scan-results">Review Vulnerabilities</a>])

C --> D{Take Action}

D -->|Convert to Test| E[Send to Dataset]

D -->|Create Task| F[<a href="/hub/ui/annotate/task-management">Distribute Task</a>]

E --> H[<a href="/hub/ui/annotate/modify-test-cases">Review Test Cases</a>]

F --> H

H --> A

Vulnerability categories

Section titled “Vulnerability categories”The scan tests for these common AI security risks:

Vulnerability Categories Detailed information about the vulnerability categories tested by the scan: 55 specialized probes across 11 vulnerability categories, detailed attack patterns and detection indicators, risk-level classifications to prioritize remediation, and comprehensive mitigation strategies with practical guidance.